Reservoir modeling: revising uncertainty quantification and workflows

Linda Gaasø

Hue

Arne Skorstad

Tone Kraakenes

Roxar

Oil and gas companies always strive to make the best possible reservoir management decisions, a task that requires them to understand and quantify uncertainties and how these uncertainties affect their decisions. When they try to assess risk, however, two bottlenecks currently impede their ability to address their business needs: great uncertainty in the geological data, and thus in the description of the reservoir, and time-consuming workflows.

New technologies and approaches enable faster, more accurate, and more intuitive modeling to help today's geophysicists make better decisions.

Earth modeling software has come a long way since its appearance in the 1980s. Reservoir modeling has become common practice in making economic decisions and assessing risk related to a reservoir during several stages of the reservoir's life, using detailed modeling to investigate the reserve estimates and their uncertainty.

Despite the progress, conventional reservoir modeling workflows are optimized around old paradigms developed in the 1980s and 1990s. The workflows are typically segmented and "siloed" within organizations, relying on a single model that becomes the basis for all business decisions for reservoir management. These models are not equipped to quantify the uncertainty associated with every piece of geological data (from migration, time picking, time-to-depth conversion, etc.). The disjointed processes may take many months, from initial model concepts to flow simulation, for multiple reasons, one of which is the outmoded technologies used to drive these processes. When faced with the challenges of increasingly remote and geologically complex reservoirs, conventional workflows can fall short.

Two main types of data are used in reservoir modeling: data from seismic acquisition and drilling data (well logs). Geophysicists typically handle the data and interpretation, and geomodelers try to turn those interpretations into plausible reservoir models. This time-consuming and resource-intensive approach requires strict quality control and multiple iterations. Data is often ignored, or overconfident interpretations are made based on poor seismic data, which reduces the chance of obtaining a realistic model.

Interpreters still frequently have to rely on manual work and hand editing of fault and horizon networks, which usually causes the reservoir geometry to be fixed to a single interpretation in the history-matching workflows. This approach makes updating structural models a major bottleneck, because methods and tools are lacking both for repeatable, automatic modeling workflows and for efficient handling of uncertainties.

As a result of the traditional manual work and time-consuming workflows, some oil fields go as long as three years between modeling efforts, even though the average rate of progression on drilling is a few meters per day. With traditional modeling, it takes several days or even weeks to construct a complete reservoir model, and at least several hours to partially update it. Earth models currently comprise dozens of surfaces and hundreds of faults, which means users cannot and should not update an earth model completely by hand. Instead they should rely on software that allows for (semi-) automated procedures, freeing the user from certain modeling tasks.

Instead of a traditional serial workflow for interpretation and geomodeling, Roxar pioneered "model-driven interpretation." This allows geoscientists to guide and update a geologically consistent structural 3D model directly from the data, and to focus their efforts where the model needs more detail. In some areas, such as on a horizon depth, neighboring data tell the same story; they are all representative. In other areas, for example close to faults, some data points are more important than others, namely those indicating where the faults create a discontinuity in horizon depth. Geoscientists should focus on these critical data points instead of on data that do not alter the model. In the extreme, a correlation of 1.0 between two variables implies that keeping one of them is sufficient, because the other value is then known, and it does not matter which one the geoscientists choose to keep. In practice, however, correlations between data are generally not 1.0, leading most geoscientists to ask: at what correlation, or redundancy level, should we simplify our data model, and thereby save time and money in updating it by omitting the extra data? Modeling efforts in the oil and gas industry should be purpose-driven. This is the key behind model-driven interpretation.
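The redundancy question can be pictured with a small sketch. It is not Roxar's method; it only assumes that redundancy is measured with a plain Pearson correlation and that a cut-off (here a hypothetical 0.95) decides which data to drop. The pick names and depth values are invented for illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equally long sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0.0 or sy == 0.0:
        return 0.0
    return cov / (sx * sy)

def prune_redundant(variables, threshold=0.95):
    """Keep a variable only if |r| against every already kept variable is below threshold."""
    kept = []
    for name, values in variables.items():
        if all(abs(pearson(values, variables[k])) < threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical horizon depth picks (m) sampled at the same five locations.
picks = {
    "pick_a": [2000.0, 2004.0, 2009.0, 2015.0, 2022.0],
    "pick_b": [2001.0, 2005.0, 2010.0, 2016.0, 2023.0],  # pick_a shifted by 1 m: redundant
    "pick_c": [2000.0, 2003.0, 2040.0, 2012.0, 2060.0],  # jumps near an assumed fault
}

kept = prune_redundant(picks, threshold=0.95)
print(kept)
```

With these invented values, the shifted copy is dropped while the pick that jumps near the assumed fault is kept, which mirrors the idea of concentrating on data that actually change the model. The threshold itself remains a judgment call that depends on the purpose of the model.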

A model-driven approach

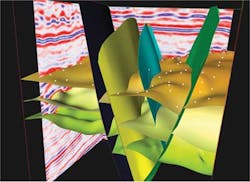

As shared earth models require resolution ranging from pore- to basin-scale, all aspects of data management, manipulation, and visualization need to straddle this enormous gap. Seismic data sets consume tens of gigabytes of disk space, but these are highly structured data sets that technologies such as HueSpace, coupled with NVIDIA GPUs, can read and process at gigabytes per second. Complex geologic models and reservoir grids may be far less structured, with representations such as polyhedrons or a mixed representation. Supporting all these types of data, including interactive manipulation, is non-trivial.

Substantial computing power is required to run the software tools efficiently for interactive visual interpretation of geophysical data. Most current technologies do not support this kind of on-site analysis and require models to be sent to a data center for processing, causing delays in the interpretation workflow as high-performance computers process the data before delivering visual interpretations back to the field. Technology is needed that can enable on-site interpretation and processing of seismic data into rendered 3D models, and current technologies are limited in their ability to achieve this.

Recently a team of Lenovo, Magma, and NVIDIA engineers joined forces to address this challenge. Working with the Norway-based technology company Hue, the team created a solution that combines powerful Lenovo ThinkStation workstations with NVIDIA GPU accelerators and Magma's high-speed expansion system to bring the computing power needed for interpretation to the field, dramatically reducing the time required to render accurate and complex models. In doing so, the team brought interactive high-performance computing technology to the geophysicists' workstations.

The ability to manipulate and interpret huge amounts of data with these new technologies allows faster and more dynamic analysis in near real-time at workstations. The thousands of processing cores and fast GDDR5 memory of NVIDIA GPUs, originally created for graphics processing, provide the processing power needed for these data sets. Software development frameworks such as HueSpace are designed to use this power to process high volumes of seismic data in seconds. This allows for interactive visualization of terabytes, or even petabytes, of data to create models that help identify subsurface prospects and help engineers make better decisions.

Quantifying uncertainty

Oil and gas reservoirs are found at depths of hundreds to thousands of meters, which limits physical access to them and makes the collection and modeling of data challenging. Seismic acquisition technology is only able to capture a portion of the earth response in a seismic image.

To determine the commercial viability of a prospect, the uncertainty of the available data needs to be quantified. Traditionally, however, the evaluation relies on a single model, or scenario, instead of stochastic models driven by the uncertainty and resolution of the data.
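The contrast between a single scenario and a stochastic description can be sketched with a small Monte Carlo calculation. The example uses the standard volumetric formula for stock-tank oil initially in place, STOIIP = GRV × NTG × φ × (1 − Sw) / Bo. All input ranges and distribution shapes below are hypothetical and chosen only for illustration; they do not come from any field.

```python
import random
import statistics

def stoiip(grv_m3, ntg, porosity, sw, bo):
    """Stock-tank oil initially in place (m3): GRV * NTG * phi * (1 - Sw) / Bo."""
    return grv_m3 * ntg * porosity * (1.0 - sw) / bo

def simulate(n=10000, seed=42):
    """Draw n realizations from hypothetical input distributions."""
    rng = random.Random(seed)
    results = []
    for _ in range(n):
        results.append(stoiip(
            grv_m3=rng.triangular(0.8e9, 1.4e9, 1.0e9),  # low, high, mode (assumed)
            ntg=rng.uniform(0.60, 0.80),                 # net-to-gross (assumed)
            porosity=rng.uniform(0.18, 0.26),            # (assumed)
            sw=rng.uniform(0.20, 0.35),                  # water saturation (assumed)
            bo=rng.uniform(1.20, 1.40),                  # formation volume factor (assumed)
        ))
    return results

volumes = simulate()
cuts = statistics.quantiles(volumes, n=10)   # nine cut points: 10th ... 90th percentile
low, mid, high = cuts[0], cuts[4], cuts[8]

# The single-scenario answer: one number, with no statement of spread.
single_case = stoiip(1.0e9, 0.70, 0.22, 0.275, 1.30)

print(f"single case:     {single_case:.3e} m3")
print(f"10th percentile: {low:.3e} m3")
print(f"median:          {mid:.3e} m3")
print(f"90th percentile: {high:.3e} m3")
```

The single case yields one volume, while the stochastic run yields a range whose width reflects the assumed input uncertainty. A full workflow would propagate uncertainty through the structural model and the simulation rather than through a closed-form formula.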

Considering the uncertainty aspect of the data, flexible models are required that can be updated in a timely manner as new data become available. Such data can be from well logs, core samples, or new seismic. However, with the current high drilling activity, the increasing use of permanent sensors for monitoring pressure and flow rates, and developments in 4D seismic monitoring, the amount of data being produced for input to the reservoir model has increased substantially. This means models need to be updated both more frequently and from more diverse data sources. The updated models are important to evaluate new and improved oil recovery measures, future well prospects, and other critical functions.

Given the uncertainty in the geophysical and geological domain regarding data and resolution, a reservoir model will not completely match the actual measured flow data of the reservoir. Hence, history-matching has been introduced to alter simulation models to better represent the actual flow rate and pressure measured in a well. Since the simulation model usually has a coarser resolution than the fine-scaled geological model, changes made (frequently by hand during history-matching) often are not incorporated into the geological model. A paradigm change is coming as fuller, closed-loop history-matching gets increased attention from many companies. A full update of the model requires automated workflows where the model is the key, instead of manual, subjective decisions based on dubious, erroneous data. Such a closed-loop approach seeks to mend the broken chain of information, so that the true data in the flow simulation domain carries value back to the model.
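The core idea of history-matching, adjusting model parameters until simulated rates agree with measured ones, can be shown with a deliberately small sketch. It assumes a toy exponential decline model in place of a flow simulator and a brute-force search in place of a real optimizer. The "observed" rates are synthetic, generated from a known decline so the result can be checked.

```python
import math

def simulated_rate(q0, decline, t):
    """Toy stand-in for a flow simulator: exponential decline q0 * exp(-decline * t)."""
    return q0 * math.exp(-decline * t)

def mismatch(decline, q0, times, observed):
    """Sum of squared differences between simulated and observed rates."""
    return sum((simulated_rate(q0, decline, t) - q) ** 2
               for t, q in zip(times, observed))

def history_match(q0, times, observed, candidates):
    """Pick the candidate decline value with the smallest mismatch."""
    return min(candidates, key=lambda d: mismatch(d, q0, times, observed))

# Synthetic "measurements": yearly rates generated with a known decline of 0.30.
times = [0, 1, 2, 3, 4, 5]
true_decline = 0.30
observed = [simulated_rate(1000.0, true_decline, t) for t in times]

candidates = [i / 100 for i in range(5, 61)]   # trial declines 0.05 ... 0.60
best = history_match(1000.0, times, observed, candidates)
print(best)
```

In a closed-loop workflow, the matched parameters would be fed back to the geological model automatically instead of living only in the simulation model.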

The need to constantly update models to address uncertainty requires a software architecture with computational tools that support interactive visual interpretation and integration of geophysical data to produce a structural model of the reservoir in a timely manner. Large-scale reservoir models, which today feature multi-million-cell unstructured grids that better honor geological features (e.g., complex faults, pinchouts, fluid contacts) and engineering details (e.g., wells), coupled with demands for greater simulation accuracy, challenge the capabilities of the underlying technology. The technology must cope with both structured and unstructured grids for the reservoir model, and with fully coupled wells and surface networks. HueSpace's approach offers a visualization-driven and "lazy" compute framework, in the sense that it only fetches and computes the absolute minimum data required, for maximum interactive performance. This approach, which uses NVIDIA GPU technologies, allows both structured and unstructured grids to be edited interactively without relying on level-of-detail approaches to achieve high interactivity.
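The general idea behind a "lazy" framework, fetching and computing only what a view actually requests, can be sketched in a few lines. This is not HueSpace's API or implementation; `LazyVolume`, `fake_loader`, and the brick size are hypothetical names and values, and the volume is one-dimensional to keep the example short.

```python
class LazyVolume:
    """Hypothetical 1D volume split into bricks that are loaded only on first access."""

    def __init__(self, loader, brick_size=64):
        self.loader = loader          # function: brick index -> list of samples
        self.brick_size = brick_size
        self.cache = {}               # brick index -> loaded samples
        self.loads = 0                # how many bricks were actually fetched

    def brick(self, index):
        """Return a brick, fetching it only if it has not been requested before."""
        if index not in self.cache:
            self.cache[index] = self.loader(index)
            self.loads += 1
        return self.cache[index]

    def sample(self, i):
        """Return sample i, touching only the brick that contains it."""
        brick_index, offset = divmod(i, self.brick_size)
        return self.brick(brick_index)[offset]

def fake_loader(index, brick_size=64):
    """Stand-in for reading from disk: sample value equals its global position."""
    return [index * brick_size + k for k in range(brick_size)]

volume = LazyVolume(fake_loader)
first = volume.sample(130)    # loads brick 2 only
second = volume.sample(131)   # same brick, served from the cache
third = volume.sample(5)      # loads brick 0
print(first, second, third, volume.loads)
```

Only two bricks are ever loaded, however large the underlying data set is. A production framework would add eviction, asynchronous I/O, and GPU-side processing, none of which is modeled here.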

Achieving high-performance computing while processing stochastic models in the field can be challenging, but Lenovo, Magma, NVIDIA, and Hue demonstrate that it is possible by combining current technologies.

By capturing the uncertainty at the beginning of the geoscience workflow, operators can gain a greater understanding of the subsurface risks and have the best possible foundation for drilling decisions.

Conclusion

The pressure is on oil and gas companies to make smart and economic decisions to maximize reservoir recovery. Current commercially available tools and workflows do not adequately address the need for making the best economic decisions and for assessing risk related to a reservoir. Their limitations result in rigid models that cannot readily incorporate new data, even though updated models lead to new knowledge and a better understanding of the reservoir.

Technology to support a better reservoir understanding is available. Operators can continuously update models everywhere in the workflow, from seismic to simulation. A combination of powerful hardware, an intelligent visualization-driven framework for computation and data-management, and a model-driven software approach to interpreting and modeling workflows can properly support reservoir modeling demands.

Contributing authors: Chris McCoy, Lenovo ThinkStation; Jim Madeiros, Magma; and Ty McKercher, NVIDIA