Gene Kliewer

Technology Editor, Subsea & Seismic

Independent simultaneous sourcing is revolutionizing marine seismic data acquisition, improving both data quality and efficiency. Its business impact is just as significant.

In the Gulf of Mexico 10 to 15 years ago, exploration activity and even discoveries below the salt began in earnest. Some of these early discoveries were drilled on "notional" maps without the kind of seismic detail available using today's technology. Consequently, further appraisal and production of those discoveries required a better understanding of the geological structures.

Good examples are the deepwater GoM subsalt discoveries. As part of its R&D effort to improve its geophysical understanding of these discoveries, BP investigated every aspect of the workflow, including seismic imaging, data processing, and velocity model construction. One conclusion was that the data itself was not sufficient.

One solution BP pioneered in 2004-2005 was the notion of wide-azimuth towed streamer data acquisition. The first such application was at Mad Dog in the GoM. The discovery was important to BP, but the geologic images possible at the time were deficient for production planning. The company used numerical simulation, high-performance computing, and other scientific knowledge and experience to simulate a novel way to acquire much denser data, many more traces per square kilometer, and much bigger receiver patches than those from a standard towed-streamer configuration. The result was to separate the shooting vessels from the streamer vessels, and to sail over the area multiple times to collect data. That is what BP called "wide-azimuth, towed-streamer seismic."

It did what it was designed to do – improve the data and bring out the structural elements of the field.

A few years ago BP had some large exploration licenses in Africa and the Middle East in areas with little or no available seismic data. The typical 2D grids shot in the 1970s and 1980s could not support modern exploration and appraisal processes. BP wanted higher-quality data to enable higher-quality decisions. The question became how to acquire data of the desired quality in the time allowed and over the large areas at an economically reasonable cost.

The company decided no existing technology would meet these criteria. Covering the areas within a very aggressive timeline would require some sacrifice in quality. Getting the quality needed, in turn, would require more time than was available and would raise acquisition costs.

In devising ways to meet the challenge, BP looked at work it had done onshore collecting data non-sequentially. Applying that experience offshore, BP had each source vessel run its assigned lines whenever it was ready and as fast as possible. No effort was made to synchronize the source vessels. Under this plan, the recording vessel was "on" all the time and recorded continuously.
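Continuous recording with unsynchronized vessels can be pictured with a minimal Python sketch. It is an illustration only, not BP's workflow: the function name `extract_shot_records`, the 4 ms sample interval, the firing times, and the single-spike "reflections" are all assumptions made for the example. The recorder keeps one long trace, and a record for each shot is cut out afterward using that vessel's logged firing times.

```python
import numpy as np

def extract_shot_records(continuous, shot_times_s, record_len_s, dt_s):
    """Cut one fixed-length window per shot out of a continuous trace."""
    n_rec = int(round(record_len_s / dt_s))
    records = np.zeros((len(shot_times_s), n_rec))
    for i, t0 in enumerate(shot_times_s):
        start = int(round(t0 / dt_s))
        chunk = continuous[start:start + n_rec]
        records[i, :len(chunk)] = chunk  # zero-pad if the window runs off the end
    return records

dt = 0.004                                # assumed sample interval (s)
continuous = np.zeros(int(60 / dt))       # 60 s of continuous recording

# Hypothetical firing times: each vessel shoots when ready, with no coordination.
shots_a = [2.0, 12.0, 22.0]
shots_b = [5.5, 14.1, 27.3]

# Toy earth response: one spike arriving 1.0 s after each A shot
# and 1.5 s after each B shot.
for t in shots_a:
    continuous[int(round((t + 1.0) / dt))] += 1.0
for t in shots_b:
    continuous[int(round((t + 1.5) / dt))] += 0.8

# Records for vessel A: 6 s windows starting at A's firing times.
rec_a = extract_shot_records(continuous, shots_a, record_len_s=6.0, dt_s=dt)

print(np.flatnonzero(rec_a[0]), np.flatnonzero(rec_a[1]), np.flatnonzero(rec_a[2]))
```

In vessel A's records, A's own arrival sits at the same sample in every window, while energy from vessel B lands at a different sample each time, or falls outside the window altogether.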

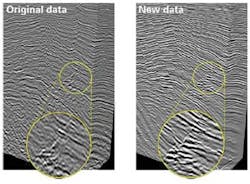

BP already was an aggressive user of ocean-bottom data collection. Narrow-azimuth, towed-streamer data densities can be on the order of 50 to 150 fold. Using sparse ocean-bottom cable (OBC) data with lines typically on opposing grids 500 m (1,640 ft) apart can result in 300 to 400 fold data sets. Some results of the non-sequential acquisition ran into the 1,000-plus fold range. The image quality improved, often substantially.
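Fold is, loosely, the number of source-receiver pairs whose midpoint falls in a given bin. The Python sketch below counts it for a toy survey to show how fold grows as source points get denser. Everything in it is an assumption for illustration: the helper names `grid` and `fold_map`, the 4 km square area, the 250 m bins, and the source spacings. Only the 500 m receiver spacing echoes the figure quoted above, and simple midpoint binning is itself a simplification for ocean-bottom data.

```python
import numpy as np

def grid(spacing_m, extent_m):
    """Regular square grid of (x, y) points covering [0, extent_m]."""
    axis = np.arange(0.0, extent_m + 1e-9, spacing_m)
    xx, yy = np.meshgrid(axis, axis)
    return np.column_stack([xx.ravel(), yy.ravel()])

def fold_map(src_xy, rec_xy, bin_size_m, extent_m):
    """Count source-receiver midpoints per square bin."""
    # Midpoint of every source-receiver pair, flattened to (n_pairs, 2).
    mid = (src_xy[:, None, :] + rec_xy[None, :, :]) / 2.0
    mid = mid.reshape(-1, 2)
    edges = np.arange(0.0, extent_m + bin_size_m, bin_size_m)
    fold, _, _ = np.histogram2d(mid[:, 0], mid[:, 1], bins=[edges, edges])
    return fold

extent = 4000.0                         # assumed 4 km x 4 km area
receivers = grid(500.0, extent)         # receivers every 500 m
sparse_sources = grid(200.0, extent)    # hypothetical sparse shooting
dense_sources = grid(100.0, extent)     # hypothetical dense shooting

sparse_fold = fold_map(sparse_sources, receivers, 250.0, extent)
dense_fold = fold_map(dense_sources, receivers, 250.0, extent)

print("mean fold, sparse sources:", sparse_fold.mean())
print("mean fold, dense sources: ", dense_fold.mean())
```

Halving the source spacing in both directions roughly quadruples the number of source points, and mean fold rises in proportion. This is why fast, unsynchronized shooting over fixed receivers can push fold so high.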

With this technique, there actually is a technical reason the signals need to be desynchronized. The trick becomes separating the different shots. The data that comes back to the recording devices is mixed because the signals from the source vessels are concurrent. In the raw returns, data from independent simultaneous sourcing (ISS) is messy. Unscrambling the data is the key. It requires special algorithms in a process BP refers to as "deblending" to make each source free of interference from every other source. BP holds several patents on how to do this.
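The value of desynchronized firing can be illustrated with a small Python sketch. It is not BP's patented deblending, only a common textbook idea: once traces are aligned on one source's firing times, that source's energy lines up from trace to trace, while the other vessel's energy arrives at effectively random times and can be suppressed with a median taken across neighboring traces. The function name `median_across_shots`, the gather size, the amplitudes, and the delay range are all assumptions.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def median_across_shots(gather, width=5):
    """Median over `width` neighboring traces at each time sample."""
    half = width // 2
    # Reflect-pad along the shot axis so edge traces get full windows.
    padded = np.pad(gather, ((half, half), (0, 0)), mode="reflect")
    # Result shape: (n_shots, n_samples, width)
    windows = sliding_window_view(padded, width, axis=0)
    return np.median(windows, axis=-1)

rng = np.random.default_rng(0)
n_shots, n_samples = 40, 500

# Source A, aligned on its own firing times: same arrival sample in every trace.
signal = np.zeros((n_shots, n_samples))
signal[:, 100] = 1.0

# Source B fired independently, so in A's time frame its arrival lands at an
# unpredictable sample in each trace (assumed uniform between 150 and 449).
interference = np.zeros((n_shots, n_samples))
delays = rng.integers(150, 450, size=n_shots)
interference[np.arange(n_shots), delays] = 0.9

blended = signal + interference
deblended = median_across_shots(blended, width=5)

energy_before = np.sum((blended - signal) ** 2)
energy_after = np.sum((deblended - signal) ** 2)
print("interference energy before:", energy_before)
print("interference energy after: ", energy_after)
```

If the two vessels fired in lockstep, source B's energy would line up across traces just as source A's does, and a filter of this kind could not tell them apart. Production deblending methods are considerably more sophisticated, but they rely on the same incoherence.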

The company's computing capacity makes this possible. The recent addition of a supercomputer to its Houston facility gave BP a capacity of more than 1 petaflop (one quadrillion floating-point calculations per second). The high-performance computing center has more than 67,000 CPUs, and the company expects to reach 2 petaflops by 2015. The system has 536 terabytes of memory and 23.5 petabytes of disk space.

The ISS acquisition technique was tested on a North Sea field. Taking the acquisition to the ocean bottom increased the leverage for getting more data more efficiently. The test showed the need for still more data density, and that came with very dense sampling of receivers. OBC gives freedom in placing source points. Ocean-bottom nodes are pressurized cylinders that hold recording equipment. Shooting vessels can travel almost anywhere they want, limited only by surface infrastructure, and that freedom is greater still because there are no streamers to tow. With this method, the limits are set by the number of ocean-bottom receivers available and the speed with which the source vessels can travel the assigned grids.

BP did a test run of the ISS data collection method in the North Sea at the Macar field, and that testing showed that the technique would work in getting higher-quality data sets faster and cheaper than with traditional means. The company shot one patch of data in the North Sea two times, once using single-source wide-azimuth acquisition and once using ISS with two source vessels. The results were nearly identical.

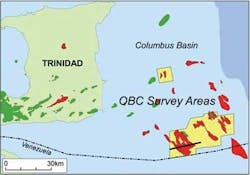

BP's biggest use of ISS so far came in Trinidad, where the company operates a very large production license. BP decided it was worth the effort to determine if there was any remaining production potential. The existing seismic data was of late-1990s vintage and consisted of towed-streamer, narrow-azimuth returns. That was sufficient for the early development of the Trinidad fields, but more than a decade later the company wanted a finer degree of resolution and much greater detail. The Trinidad holdings have several fields with multiple stacked reservoirs and from a seismic perspective can be treated as one big, highly complex field.

In addition to the size of the area, Trinidad has roughly a six-month shooting season due to environmental, weather, and regulatory concerns. Covering the required area would take upward of 700 days, and that was too long. The area also had a lot of infrastructure that would make a towed-streamer survey extremely difficult to conduct.

To get high-density, high-quality data within a reasonable time and at a reasonable cost, BP brought in the idea of marine ISS coverage.

BP used the same crews in Trinidad that had performed the North Sea tests, so the personnel were familiar with the process. For efficient contracting, BP entered a two-year agreement with WesternGeco for vessels in the North Sea over the summers of 2011 to 2013, and to use the same crews in Trinidad during the intervening winter months. Working continuously with the same two vessels, sharing lessons between the two regions, and increasing the length of cables used helped both operational and HSE performance. Compared to the first season, the second season increased acquisition efficiency by 45%, with a corresponding 22% reduction in cost per square kilometer.

In Trinidad's multiple stacked reservoirs, gas-saturated sand tends to slow sound transmission, and the seismic waves tend to distort to the point that the shallow pay masks the deeper pay. The Columbus basin seismic survey was designed to progress resources and to discover new prospects in existing and future major gas fields. The 1,000-sq km (386-sq mi) high-density, 3D, ocean-bottom sensor program was the first commercial use of the ISS technology with seabed acquisition.

Editor's note: This article is based on interviews with John Etgen, distinguished advisor for advanced seismic imaging, and Raymond Abma, senior research geophysicist, both of BP.