SEISMIC INTERPRETATION - Seismic by ear: New interpretation modes with sonification

When exploring our everyday world we do not use sight only, but pick up information through all our senses. We instinctively make our decisions and actions based on multi-sensory information. Highly complex data analysis tasks, such as the interpretation of seismic data, should be made multi-modal in order to take advantage of these inherent capabilities.

Instead of crowding increasing amounts of information inside the strained bandwidth of visual perception, we advocate the use of an extended human processing bandwidth. Sound is the most natural extension of the familiar visual display. Visual and auditory information allow parallel cognitive processing and thereby a higher display dimensionality. The technical term for displaying information through sound is sonification. This article introduces the emerging field of auditory displays and covers:

- Motivation: Assets of auditory perception

- Explanation: Approaches to sonification of large data sets

- Application: Suggestions for use of sound in seismic interpretation

Auditory perception

A multi-modal display should take advantage of the particular assets of each sense. So, to design an effective auditory display we need a thorough knowledge of psychoacoustics and cognitive processes involved in listening. As a starting point, consider the way we use our ears.

In spatial perception, the visual sense is superior in both discrimination and precise localization. Audition is omni-directional and therefore well suited for spatial detection, but the resolution is relatively poor, particularly in the vertical direction. Similarly, when we determine object properties such as size, shape or surface texture, we rely on vision. However, some internal properties - material, solidity, or mass - are attainable through the sounds produced when interacting with the object. This could be used, at least metaphorically, to attach sounds describing properties to interpreted seismic entities.

Sound is a temporal medium. Auditory perception is superior to vision when it comes to response time, acuity, and the ability to recognize temporal patterns. It allows temporal processing on several levels of time simultaneously. In music we can recognize high-level structure spanning several minutes, melodic and rhythmic variations in the order of seconds, and the subtle timbral nuances of a single instrument changing over a few milliseconds. All levels are perceived at the same time. This provides us with the capacity to detect periodicity, recurring patterns, and trends in data over a wide dynamic range.

Sudden changes in sound are easily picked up by the auditory system, and effectively draw attention to the event that caused them. Sounds turning on or off are especially noticeable. In an auditory display, important data values (such as zero phase or bright spots) can trigger singular sound events, making them easily detectable.

The ear is capable of handling several auditory streams at the same time. By using fine-tuned perceptual skills we can distinguish different streams in a complex auditory scene and follow them separately (as when shifting attention between different speakers at a party). In an auditory display, several seismic attributes can be monitored at the same time in addition to the one that is visualized. A carefully crafted display permits manipulations of perceptual cues related to the separability of each stream, allowing either a fusion, in which all streams are perceived as a single complex percept, or several easily separable streams.

The ear is effectively a frequency analyzer in combination with a neural pattern recognition system. Subtle changes in spectral content help identify important events or messages. This ability is robust under extremely varied conditions. We are even capable of separating the contributions of the sound source from the modulations made by the environment, making the latter available for perceptual processing as well. Some important assets of auditory perception are:

- Acute temporal resolution

- Flexible multi-dimensional perception (several streams, several levels)

- Fine-tuned pattern recognition (spectral and temporal).

When combining sense modalities one has to take care to avoid interference and resource conflicts. Auditory and visual processing seem to draw on different resources and therefore work well in parallel. The added benefits are higher display dimensionality, complementary information (spatial vs. temporal), and mutual confirmation. It is also well known that adding high-quality sound to virtual reality has a significant effect on the sense of presence and on perceived visual quality. Sound is engaging and enhances learning.

Sonification approaches

A very straightforward and intuitive approach to sonification of seismic data is to play the seismic trace as it is. Seismic data obey the same physical wave equation as sound. The trace, however, is very low in frequency, mostly well below 100 Hz. Human hearing encompasses more than nine octaves (20 Hz - 20 kHz), but only the five octaves from 150 Hz - 5 kHz are considered useful for sonification purposes (by comparison, color vision spans less than an octave). The frequency range of the seismic trace must therefore be shifted up into the range of human hearing.
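The arithmetic behind this shift can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the 10-80 Hz seismic band used in the example is an assumed figure, not one taken from this article. It relies on the fact that playing a signal back k times faster multiplies every frequency in it by k.

```python
import math

def octaves(f_low_hz, f_high_hz):
    """Number of octaves between two frequencies (one octave = a doubling)."""
    return math.log2(f_high_hz / f_low_hz)

def speedup_range(sig_low_hz, sig_high_hz,
                  target_low_hz=150.0, target_high_hz=5000.0):
    """Playback speed-ups that place the whole signal band inside the target band.

    Returns (k_min, k_max), or None when the signal band spans more octaves
    than the target band and therefore cannot fit at any speed.
    """
    k_min = target_low_hz / sig_low_hz    # lifts the lowest frequency to the target floor
    k_max = target_high_hz / sig_high_hz  # keeps the highest frequency under the ceiling
    return (k_min, k_max) if k_min <= k_max else None

print(round(octaves(20, 20_000), 2))   # full range of hearing
print(round(octaves(150, 5_000), 2))   # range quoted as useful for sonification
print(speedup_range(10.0, 80.0))       # assumed 10-80 Hz seismic band
```

With the assumed 10-80 Hz band, any speed-up between 15 and 62.5 keeps the whole band inside 150 Hz - 5 kHz.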

There are several reports of listening to frequency-shifted seismic data in planetary seismic. By playing back long records of data at high speed, the observer can recognize the sound signatures of earthquakes and nuclear explosions in a time-efficient way. For exploratory seismic the situation is different. The records are short, so frequency shifts must be combined with time stretching. This will necessarily influence signal quality. Trace playback might be a useful tool when processing seismic data, but a more elaborate approach must be taken for seismic interpretation.
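Why short records force time stretching can be seen from a rough calculation. The sketch below uses hypothetical function names and assumed figures (a 24-hour record, a 6 s trace, and the speed-ups shown); none of them come from this article.

```python
def playback_duration(record_seconds, speedup):
    """Listening time when a record is simply played back faster."""
    return record_seconds / speedup

def stretch_needed(record_seconds, speedup, desired_seconds):
    """Pitch-preserving time-stretch factor needed after the speed-up
    to bring the listening time back up to desired_seconds."""
    return desired_seconds / playback_duration(record_seconds, speedup)

# Assumed long record: 24 hours played 200 times faster.
print(playback_duration(24 * 3600, 200))
# Assumed short record: a 6 s trace played 30 times faster.
print(playback_duration(6.0, 30))
# Stretch required to listen to that short trace for 3 s.
print(stretch_needed(6.0, 30, 3.0))
```

Under these assumptions the day-long record still lasts 432 s, just over seven minutes, whereas the 6 s trace is over in 0.2 s and would need to be stretched about fifteen-fold to fill three seconds.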

Current computer tools for seismic visualization and interpretation draw on the state of the art of computer graphics and human-computer interface technology. A similarly broad approach should be taken when designing auditory displays.

Exploring large seismic data sets involves two main tasks: Orientation (finding interesting regions of data) and analysis (detailed investigation of that region). We must decide what features to investigate - structures, textures, seismic attributes, bright spots, etc. - and on their distribution level - single point, local area, or larger section.

After deciding on what information to display, we must ensure that the interpreter receives the intended information. We presuppose a transformation from data variables to sound parameters. From psychoacoustics, we learn what audible parameters to use, their characteristics, and limitations. Pitch is the most salient. The classical sonification scheme maps a data variable to the pitch of a musical instrument.
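A minimal sketch of such a data-to-pitch mapping follows. It is one possible design, not a prescribed one: the function names are hypothetical, the default 150 Hz - 5 kHz limits are borrowed from the useful range quoted earlier, and the mapping is made linear in log-frequency on the assumption that equal data steps should sound like equal musical intervals.

```python
import math

def value_to_frequency(value, v_min, v_max, f_low_hz=150.0, f_high_hz=5000.0):
    """Map a data value to a frequency, linearly in log-frequency."""
    if v_max <= v_min:
        raise ValueError("v_max must exceed v_min")
    t = (value - v_min) / (v_max - v_min)
    t = min(1.0, max(0.0, t))  # clamp out-of-range data to the ends of the band
    return f_low_hz * (f_high_hz / f_low_hz) ** t

def frequency_to_midi(freq_hz):
    """Nearest MIDI note number, using the convention A4 = 440 Hz = note 69."""
    return round(69 + 12 * math.log2(freq_hz / 440.0))

# An attribute value halfway through its range lands on the geometric
# mean of the two band limits, not on their arithmetic mean.
f = value_to_frequency(0.5, 0.0, 1.0)
print(round(f, 1), frequency_to_midi(f))
```

Quantizing to MIDI notes, as in the second function, is optional; it trades resolution for a display that sounds like a musical instrument.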

Other interesting sound parameters are loudness, pulse rate (for a pulsing sound), and modulation. Several data variables can be represented by the parameters of a single sound. The perceptual interaction of parameters and of sounds must be carefully handled to avoid masking of information and to facilitate stream segregation. This is not a trivial task.
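As an illustration of several variables carried by one sound, the sketch below synthesizes a pulsing tone whose pitch, loudness, and pulse rate are set by three data values. Everything here is an assumption made for the example: the function names, the three normalized attributes `a`, `b`, and `c`, and the chosen parameter ranges. It does not address the perceptual interactions mentioned above.

```python
import math

def pulsed_tone(freq_hz, gain, pulse_hz, seconds=1.0, sample_rate=8000):
    """Samples of a sine tone whose loudness pulses smoothly on and off.

    freq_hz sets the pitch, gain (0..1) the loudness, pulse_hz the pulse rate.
    """
    n = int(seconds * sample_rate)
    samples = []
    for i in range(n):
        t = i / sample_rate
        # Raised-cosine envelope: swings between 0 and 1, pulse_hz times per second.
        envelope = 0.5 * (1.0 - math.cos(2.0 * math.pi * pulse_hz * t))
        samples.append(gain * envelope * math.sin(2.0 * math.pi * freq_hz * t))
    return samples

def sonify_point(a, b, c):
    """Map three attributes, each already normalized to 0..1, onto one sound."""
    freq_hz = 150.0 * (5000.0 / 150.0) ** a   # attribute a -> pitch
    gain = 0.2 + 0.8 * b                      # attribute b -> loudness
    pulse_hz = 1.0 + 9.0 * c                  # attribute c -> 1 to 10 pulses per second
    return pulsed_tone(freq_hz, gain, pulse_hz, seconds=0.5)

samples = sonify_point(0.5, 0.5, 0.5)
print(len(samples), max(abs(s) for s in samples) <= 0.6)
```

The samples are plain floats in the range -1 to 1 and would still need to be written to a sound file or audio device to be heard.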

An alternative approach, ecological acoustics, focuses on the way we listen in the real world. Sounds are perceived as physical interactions between objects. They communicate the properties of objects, events and their surroundings. Available parameters are therefore physical and object-related: pipe thickness, pipe length, and impact force to describe the sound of hitting a metal pipe. Our hearing system is naturally adapted to process this kind of information efficiently. The sounds and parameters used should have an associative connection with the data they represent.

From music theory and music psychology we learn how to organize the auditory display in multiple streams and structural levels. Typically, we would let large data structures correspond to high-level sound structures in the auditory display. Computer music research has generated several models for controlling timbre through data-driven sound synthesis, allowing us to apply parameterized imitations of musical and everyday sounds in our sonifications.

Spatial sound makes it possible to localize virtual sound sources in the 3D space around the listener using loudspeakers or headphones. One should be wary of relying on sound localization as an information carrier in itself, due to the low spatial resolution, but it supports rapid, omni-directional detection and can aid orientation in large data sets. Spatially distributed sound sources are easier to separate and increase the realism of a virtual display. Adding reverberation to distant sounds can provide a sense of scale or room size.

Finally, sound design in general is important. The sounds must not be annoying or tiring in long-term use, or else the display is quickly turned off. All in all, the design of an auditory display is a complicated, multi-disciplinary task. The purpose is to optimize the information flow to the interpreter, without presupposing a higher competence in music and sound on his/her part. As with all other tools, training is crucial for effective use.

One particular problem arises when we attempt to sonify seismic data sets: The data sets are spatial, while sound is temporal. In order to exploit the temporal precision of audition we must make trajectories through the seismic data set, either interactively (by mouse) or along predefined objects such as seismic horizons or well paths. Playing along the trajectory gives the data a temporal dimension in which patterns emerge and significant data values stand out.
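The idea of turning a spatial data set into a time series can be sketched as follows. This is a simplified illustration with hypothetical names: the data set is reduced to a small 2D grid, the trajectory to straight segments between waypoints, and values are read with nearest-neighbour lookup. The order in which values are read becomes the time axis of the sound.

```python
def sample_along_path(grid, waypoints, steps_per_segment=10):
    """Read values from a 2D grid along straight segments between waypoints.

    grid is a list of rows; waypoints is a non-empty list of (row, col) pairs
    that lie inside the grid. Returns the values in visiting order.
    """
    values = []
    for (r0, c0), (r1, c1) in zip(waypoints, waypoints[1:]):
        for k in range(steps_per_segment):
            t = k / steps_per_segment
            r = round(r0 + t * (r1 - r0))  # nearest grid row
            c = round(c0 + t * (c1 - c0))  # nearest grid column
            values.append(grid[r][c])
    # The loop stops just short of each segment's end, so add the last waypoint.
    r_end, c_end = waypoints[-1]
    values.append(grid[round(r_end)][round(c_end)])
    return values

# Toy data: the value encodes its own position (10 * row + column).
grid = [[0, 1, 2, 3],
        [10, 11, 12, 13],
        [20, 21, 22, 23]]
print(sample_along_path(grid, [(0, 0), (0, 3)], steps_per_segment=3))
print(sample_along_path(grid, [(0, 0), (2, 2)], steps_per_segment=2))
```

In an interactive display the waypoints would come from mouse movement; along a horizon or well path they would come from the interpreted object itself.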

Interaction is an essential element in auditory displays. The author has developed a model of a virtual "listener" moving along paths in the data set. At each location sounds are generated representing different local features of data, from attribute values to more complex textural and structural properties. Whenever the interpreter detects an interesting phenomenon, he/she can leave a visual marker at the location or record a short path to be retraced later.

This model supports orientation and search in large complex data sets. The process could be automated, traversing the data set according to a predefined algorithm, but allowing the interpreter to pause, backtrack, and mark along the way. Alternatively, the interpreter selects specific search criteria and lets the traversal algorithm work in the background. Locations satisfying the criteria are marked and announced with a spatialized sound.
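A background search of this kind might look like the sketch below. It is not the author's model: the function names are hypothetical, the traversal is a plain scan of a 2D grid, the criterion is an assumed amplitude threshold, and the spatialization is reduced to constant-power stereo panning by column.

```python
import math

def find_matches(grid, criterion):
    """Scan every cell of a 2D grid and return the (row, col) of each match."""
    return [(r, c)
            for r, row in enumerate(grid)
            for c, value in enumerate(row)
            if criterion(value)]

def stereo_gains(col, n_cols):
    """Constant-power pan: column 0 is hard left, the last column hard right."""
    pan = col / (n_cols - 1) if n_cols > 1 else 0.5
    angle = pan * math.pi / 2.0
    return math.cos(angle), math.sin(angle)  # (left gain, right gain)

grid = [[0.1, 0.9, 0.2],
        [0.3, 0.4, 0.95]]
# Assumed criterion: flag unusually strong amplitudes.
for r, c in find_matches(grid, lambda v: v > 0.8):
    left, right = stereo_gains(c, len(grid[0]))
    print((r, c), round(left, 3), round(right, 3))
```

Each match yields a marker location plus a pair of channel gains, so the alert sound appears to come from the side of the display where the match lies.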

The model can be taken one step further by using sounds that are physically related to the data domain. The virtual listener "walks" through data as in a subterranean cave. The sound of his footsteps is directly related to the local texture, giving stratigraphic information. Predefined objects are sonified as irregular drops of fluid with material properties reflecting characteristics of the object and presented in a virtual 3D sound space to aid localization.

Small subsets of data could be investigated using sounds characteristic of our interaction with mineral substances, such as knocking and scraping, parameterized to reflect properties of the data at hand. In addition to the conveyance of information such a display will exploit our affective response to sound, providing increased enthusiasm and engagement to the interpretation process.

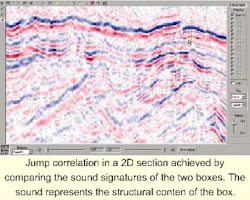

We expect sonification to be introduced into exploratory seismic through specialized tools tailor-made for specific applications. The VRGeo consortium has demonstrated a tool for sonification of multiple attributes in well logs. A group at the University of Houston experimented with sonification of 2D flow fields. This author has received positive responses to a sound tool used for structural correlation. A small box is moved through data and the main structures inside are mapped into a musical chord. We are currently working on a 3D extension of this technique using a combination of spectral (harmonic) and temporal patterns.
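The article does not describe how the structural correlation tool derives its chord, so the following is purely a hypothetical illustration of the general idea, not the author's method. It assumes that the "main structures" in the box are the strongest amplitude peaks of a single trace, and that each peak's depth picks a note on a pentatonic scale, with deeper peaks sounding lower. All names and constants are invented for the example.

```python
PENTATONIC = (0, 2, 4, 7, 9)  # semitone offsets within one octave

def strongest_peaks(trace, n_peaks=3):
    """Indices of the n largest local maxima of |amplitude|, ordered by depth."""
    mags = [abs(v) for v in trace]
    peaks = [i for i in range(1, len(mags) - 1)
             if mags[i] > mags[i - 1] and mags[i] >= mags[i + 1]]
    peaks.sort(key=lambda i: mags[i], reverse=True)  # strongest first
    return sorted(peaks[:n_peaks])                   # back to depth order

def box_to_chord(trace, n_peaks=3, top_midi=84):
    """One MIDI note per peak; each step down in depth is one scale step lower."""
    notes = []
    for i in strongest_peaks(trace, n_peaks):
        octave, degree = divmod(i, len(PENTATONIC))
        notes.append(top_midi - 12 * octave - PENTATONIC[degree])
    return notes

# Assumed amplitudes inside the box, shallowest sample first.
trace = [0.0, 0.2, 1.0, 0.1, -0.8, 0.0, 0.3, 0.05]
print(strongest_peaks(trace))
print(box_to_chord(trace))
```

Two boxes containing the same pattern of reflectors would then produce the same chord, which is the property a correlation aid needs; how the actual tool achieves this may differ entirely.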

A number of other applications have been suggested:

- Navigational aid in well planning (sonify curvature or channel position)

- Editing support for fault interpretation (quick examination of attributes)

- Interpreting stratigraphy and seismic texture (complex sound signatures carrying several statistical and spectral parameters)

- Sonifying fluid flow in 4D reservoir data.

We expect sonification to be a natural part of the seismic interpretation process in the future. Currently, we need a breakthrough application to demonstrate its potential to a larger user community. The author would be happy to discuss possible uses of sound in seismic interpretation and to provide additional information to the interested reader.

Author

Sigurd Saue has a background in acoustics and music technology.

Suggested applications

- Sonify patterns changing in time: In spatial data temporality can be introduced through movement and interactivity.

- Sonify several simultaneous objects/attributes: When the task is to detect anomalies or deviations (faults or channels), a simultaneous presentation of many attributes can be very effective.

- Sonify complex, multidimensional patterns: Textural and/or structural features could be mapped into tonal compounds (chords, timbres) stimulating our spectral pattern recognition.

- Sonify what we cannot see: Use sound for quick examination of additional attributes. Sound can fill in information in the transparent parts of a 3D data cube.

- Give operational feedback: In virtual reality and 3D visualization systems, there often is a lack of feedback telling the operator that contact is made or that he/she is grasping the object. Subtle sound cues could alleviate this.