Data management in a multi-platform age

Clash of storage requirements calls for long-term approach

Philip Neri

Paradigm

Energy companies generate digital information at an unprecedented pace at all stages of exploration and production. Different stages of that life cycle benefit from varying degrees of structured data management, but there is no single solution that encompasses all the data from all sources and for all disciplines. As the many parties that manage and consume data come to realize, the one-size-fits-all comprehensive data repository will never materialize. Alternate strategies based on modern data federation and interoperability attract increasing interest as a realistic way to support operational efficiency.

Multi-platform

The definition of an IT or infrastructure platform normally includes only the combination of an operating system and a hardware standard. In oil and gas today, that mostly means Windows or Linux on Intel architecture. In practice, a further separation arises from the data structures used by the subsurface, engineering, field, and back-office applications.

Subsurface systems

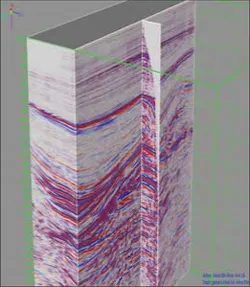

The massive amounts of data generated by seismic surveys require a high level of structured storage strategies to remain traceable and to be accessible to an increasing number of stakeholders. Gone are the days when the petabytes of field data remained in the confines of the mega-data processing centers (typically running Linux clusters augmented by graphic card processors); nowadays interpreters need to access the field information even as they look at the stack-migrated datasets that are only a few hundred gigabytes in size.

For monolithic data management systems, this creates disconnects where interpretation runs on Windows-based systems. Subsurface scientific applications also rely on direct access to all well measurements, which on a regional basis can cover many hundreds of thousands of boreholes, each with many logs, cores, samples, etc. So it is no surprise that software suites used in subsurface exploration, delineation, and production monitoring are highly relational, offer powerful search capabilities, and, in the more evolved cases, also deliver cross-platform interoperability, including browser-based navigation and selection tools. In most cases, subsurface activity takes place in areas where production is already ongoing. This requires access to existing production data, which typically resides in other data systems.

Engineering systems

Engineering software covers many activities, from planning the development of a field using modeling and simulation technologies to the software operated mostly by contractors for construction and operation. Because modeling and simulation are often built on the same or similar infrastructure as subsurface solutions, their data remains structured and manageable. Further into the realm of engineering, however, structured systems yield center stage to ad hoc solutions built around Microsoft Excel, pivot tables, and other mainstream generic software products. Data management beyond disaster recovery and data backup is, therefore, almost impossible.

Field systems

Modern drilling facilities carry substantial computing resources, many of them operating in proprietary environments managed by the contracting companies that perform the logging, mud-logging, rig operation, and other tasks that are part of life on the rig. Real-time information is transmitted off-site using industry-standard protocols such as WITSML. In recent years, the instrumentation of drilling systems and, to a much larger extent, production facilities has generated massive amounts of data. The software systems to screen and monitor this avalanche of information are still in their early years of development and deployment.

Back-office systems

Back-office systems by nature operate on top of a highly structured platform, built on process software from major providers. The need to sustain audits tracing back over the years, the complex accounting of cost sharing, and the need for some degree of transparency among partners and for compliance with many regulations all point toward well-structured data systems.

A connected environment

Activity integration is a driving process for most energy companies, mirroring the accelerating pace of energy projects while keeping an eye on an ever-changing business environment and increasingly complex hydrocarbon plays. Assembly-line workflows (in which each discipline performs its designated task and hands the result on to the next one) have given way to integrated, team-based workflows in which multiple disciplines tackle a problem together, iterating through certain steps to converge on an ideal outcome or to explore multiple scenarios. This trend motivates many of the data integration initiatives, e.g. in subsurface systems. As the drive for more efficiency and further risk reduction continues, integration is set to expand in scope.

Assembly-line work does not require integration, and can operate smoothly as long as data hand-over is streamlined. Team-oriented work demands integrated data management, including sophisticated user role management and data ownership tracking, so that each user can safely add value to the outcome without interfering with other team members' roles.
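As a rough illustration of role management combined with ownership tracking, the sketch below checks whether a team member may edit a shared object. The role names, the permission sets, and the `can_edit` rule are assumptions made for this example only; they do not describe any particular vendor's system.

```python
from dataclasses import dataclass
from typing import Dict, Set

# Hypothetical role-to-permission mapping; real systems define their own.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"read"},
    "interpreter": {"read", "create"},
    "data_manager": {"read", "create", "edit_any"},
}


@dataclass
class TeamObject:
    """A shared data object with a recorded owner."""
    name: str
    owner: str


def can_edit(user: str, role: str, obj: TeamObject) -> bool:
    """Owners with 'create' rights may edit their own objects;
    only roles holding 'edit_any' may change other people's work."""
    perms = ROLE_PERMISSIONS.get(role, set())
    if "edit_any" in perms:
        return True
    return obj.owner == user and "create" in perms


# Illustrative object owned by one interpreter.
horizon = TeamObject(name="Top Reservoir", owner="alice")
```

With this rule, `alice` can refine her own horizon, a second interpreter can view but not overwrite it, and a data manager can correct it when needed.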

Limits of large systems

In theory, one could envision a seamless single data management system that includes all data from all aspects of the business, but several factors make that unrealistic. To begin with, it would require that all data already exist in some form of structured environment.

Large-footprint systems, i.e. systems that span many disciplines or even different departments within an organization, may improve the efficiency of a specific asset, group of assets, or regional entity. In reality, however, their size and the complexity of their management mean that one company ends up with several of these systems, separated no longer along the lines of technical disciplines or departments but along the lines dividing different geographical businesses. While each entity becomes more effective through its vertical integration, trans-regional or multi-asset studies become more difficult, or require data duplication across the lateral boundaries of the different verticals.

A different approach

Data federation is an alternative data strategy deployed in many domains, not least in subsurface data management. The challenge is to manage data concurrently in a high-production seismic data processing center (mostly on Linux), use models and interpretation information from geoscientists working on Linux or Windows workstations, and push results into an engineering environment that operates mostly on Windows software. This challenge can be addressed with distributed, cross-platform systems. The client-server architecture links applications to a number of different repositories, each in a different physical location (data center, workstation data servers, individual workstations, or laptops) and each with its own data management specifics. Seismic data requires highly optimized data streaming as well as random access to extremely large data volumes; interpretation data needs high levels of security and the real-time preservation of editing activity; well data requires structures optimized for random search and on-the-fly filtering of millions of small records; and modeling data must support real-time updates of many related and highly complex objects using transforms.

A federated client-server architecture allows each data type to be hosted and structured in the most appropriate way, while giving the appearance of a unified collection of data. The architecture is open to the addition of new data objects, including those from other platforms, and thus avoids data duplication without requiring a migration of existing data to a new system. Among other benefits, this greatly facilitates group data review.
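The idea can be sketched in a few lines of Python. This is a minimal, in-memory illustration under stated assumptions, not a description of any real product: the names `Repository`, `InMemoryRepository`, and `FederatedCatalog`, and the `type/id` key convention, are all invented for the example. Each data type stays in its own store, and the catalog only routes requests.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class Repository(Protocol):
    """Anything that can list and fetch objects of one data type."""
    def list_ids(self) -> List[str]: ...
    def fetch(self, object_id: str) -> dict: ...


@dataclass
class InMemoryRepository:
    """Stand-in for a real store (seismic server, well database, ...)."""
    location: str
    objects: Dict[str, dict] = field(default_factory=dict)

    def list_ids(self) -> List[str]:
        return sorted(self.objects)

    def fetch(self, object_id: str) -> dict:
        return self.objects[object_id]


class FederatedCatalog:
    """Presents several repositories as one collection without copying data."""

    def __init__(self) -> None:
        self._repos: Dict[str, Repository] = {}

    def register(self, data_type: str, repo: Repository) -> None:
        # New data types or platforms are added here; existing data stays put.
        self._repos[data_type] = repo

    def list_all(self) -> List[str]:
        # Unified view: "type/id" keys drawn from every registered repository.
        return [f"{t}/{i}" for t in sorted(self._repos)
                for i in self._repos[t].list_ids()]

    def fetch(self, key: str) -> dict:
        # Route the request to the repository that owns this data type.
        data_type, object_id = key.split("/", 1)
        return self._repos[data_type].fetch(object_id)


# Illustrative content only.
catalog = FederatedCatalog()
catalog.register("seismic", InMemoryRepository(
    "data center", {"survey-A": {"size_gb": 400}}))
catalog.register("well", InMemoryRepository(
    "workstation server", {"well-001": {"logs": ["GR", "RHOB"]}}))
```

A client sees a single listing through `catalog.list_all()` and retrieves any item with `catalog.fetch("well/well-001")`, while the seismic and well stores remain free to use whatever internal structure suits them.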

Tools for oversight

Regardless of the data system structure, the reality is that a given company will have more than one system, and in many instances more than one system of a particular type, either to accommodate a multi-vendor environment or because each business unit has its own system. Over the life of an energy company's data repositories, and as the data systems in each department evolve over the years, even a single system will come to contain invalid, erroneous, or duplicate data objects. This has spurred the development of tools that give a high-level view of what data is stored where, and that allow a drill-down to specific data objects to locate and analyze data duplication, multiple versions of a particular data object, labeling errors, and other common issues.
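A simple version of such a check can be sketched as follows. The sketch assumes that an inventory has already been harvested from each system and that every object carries a content checksum; the `DataObject` fields, the system names, and the report categories are hypothetical choices for illustration.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class DataObject(NamedTuple):
    system: str    # which repository holds the object
    name: str      # label as stored in that system
    version: int
    checksum: str  # fingerprint of the content


def find_issues(inventory: List[DataObject]) -> Dict[str, List[str]]:
    """Flag duplicated content, inconsistent labels, and multiple versions."""
    by_checksum = defaultdict(list)
    by_name = defaultdict(set)
    for obj in inventory:
        by_checksum[obj.checksum].append(obj)
        # Normalize labels so that case and spacing differences do not hide matches.
        by_name[obj.name.strip().lower()].add(obj.version)

    report = {"duplicates": [], "multiple_versions": [], "label_variants": []}
    for objs in by_checksum.values():
        if len(objs) > 1:  # identical content stored more than once
            systems = sorted({o.system for o in objs})
            report["duplicates"].append(f"{objs[0].name}: {', '.join(systems)}")
        labels = sorted({o.name for o in objs})
        if len(labels) > 1:  # same content under different labels
            report["label_variants"].append(" / ".join(labels))
    for name, versions in by_name.items():
        if len(versions) > 1:
            report["multiple_versions"].append(name)
    return report


# Illustrative inventory drawn from two hypothetical regional systems.
inventory = [
    DataObject("houston", "Well-001 GR", 1, "abc"),
    DataObject("aberdeen", "well-001 gr", 1, "abc"),
    DataObject("houston", "Horizon-Top", 1, "d1"),
    DataObject("houston", "Horizon-Top", 2, "d2"),
]
report = find_issues(inventory)
```

Here the gamma-ray log is reported both as a duplicate held in two systems and as a labeling inconsistency, while the horizon is reported as existing in more than one version.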

The future

It is increasingly clear to data management experts that no single solution can address the needs of online, active-use data systems and at the same time provide for the perennial availability of older data items that, while not in active use, may be needed for future reference. Companies typically retain data, and in some cases reprocess it, over periods of up to 10 years. Over that time, a given system will have gone through a number of format changes, and it is not uncommon for a new vendor to have replaced the incumbent for specific parts of the application portfolio. Archiving strategies that retain data in application-specific formats are well suited to short-term re-use scenarios and disaster recovery. Longer-term archiving, however, calls for a vendor-neutral environment in which data is stored in industry-standard formats (e.g. LAS for well data). Any future data system can then retrieve the data without the data structure upgrades that are normally needed to access application-specific archives that have sat on a shelf for a number of years. Industry standards exist for many types of data. Where none is available, structured data tables with ample metadata will be easier to access and reload than a proprietary data structure.
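For the fallback case, where no industry standard applies, one possible shape for "structured data tables with ample metadata" is a plain CSV table plus a JSON description. The sketch below uses only the Python standard library; the function names, the metadata keys, and the sample well name and values are illustrative assumptions rather than an established archive format.

```python
import csv
import io
import json
from typing import Dict, List, Tuple


def export_table(rows: List[Dict[str, float]],
                 metadata: Dict[str, object]) -> Tuple[str, str]:
    """Return (csv_text, metadata_json) for a table of measurements."""
    columns = list(rows[0].keys())
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    # Record column names and row count next to the descriptive metadata so a
    # future loader does not depend on the exporting application.
    sidecar = dict(metadata, columns=columns, row_count=len(rows))
    return buffer.getvalue(), json.dumps(sidecar, indent=2, sort_keys=True)


def reload_table(csv_text: str) -> List[Dict[str, float]]:
    """Read the archived table back using nothing but the CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [{key: float(value) for key, value in row.items()} for row in reader]


# Made-up sample values for illustration.
rows = [{"DEPT": 1000.0, "GR": 45.2}, {"DEPT": 1000.5, "GR": 47.8}]
metadata = {"well": "EXAMPLE-1", "units": {"DEPT": "m", "GR": "gAPI"}}
csv_text, metadata_json = export_table(rows, metadata)
```

Because both parts are plain text, the archive can be reloaded years later with generic tools, and the units and provenance travel with the numbers instead of living inside a proprietary project database.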

Closing review

Data management and cross-disciplinary integration are crucial to increasing efficiency in all aspects of oil and gas exploration, production, and administration. Data is generated in unprecedented volumes, while the business environment has become more demanding at all stages of an energy asset's life cycle. Innovation is key to solving new challenges and to redefining the paradigms that govern this critical activity. At stake is the ability of a competitive organization to extract more information, materialize more opportunities, and make the best use of cost efficiencies to remain ahead of the game.
